Happy New Year from The Speedlighter!

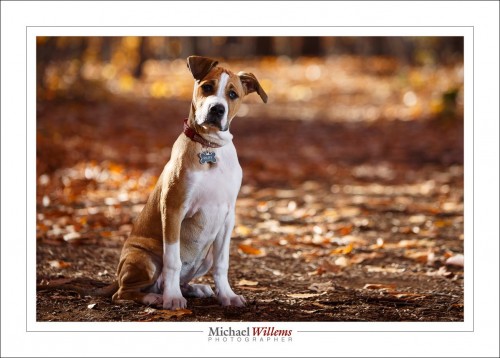

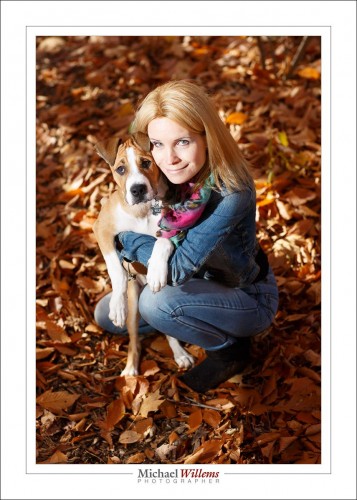

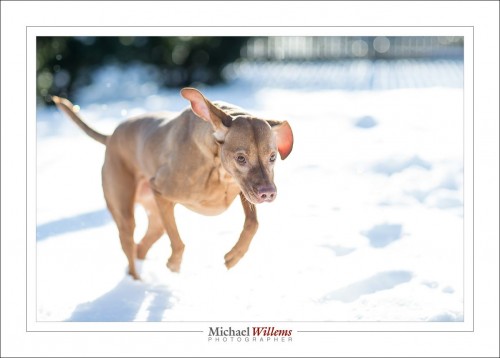

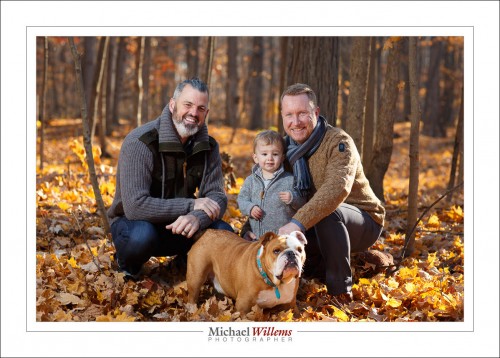

As for your 2017 resolutions, how about this one: Make this the time you finally perfect those skills you always wanted to hone! Skills that allow you to quickly and easily do pictures like the ones I took over the last couple of weeks. These include a few animal (and animal-plus-owner) pictures:

All those were made with the 85mm f/1.2 lens, and used a single speedlight in an umbrella.

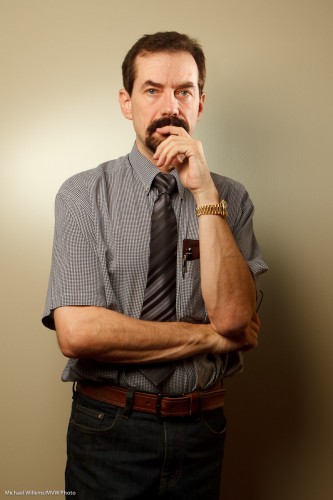

But I also did an executive portrait, just yesterday:

Do you see the difference between the two above? For the first one, I did not want to show the outside (boring, homes). Easy, so the picture,like almost all my pictufes, was stright out of the camera.

For the second one, however, I did want to show the blue sky. So I exposed that one less (using the magic Outdoors Recipe–one of the things you will learn if you turn up). Both used flash, of course; fired by Pocketwizards and with their power set manually. The second one used much more flash power because I was using low ISO and small aperture to kill the outside light. I also had to, therefore, brighten the Apple logo in post-production.

I would almost call that last one an environmental portrait.

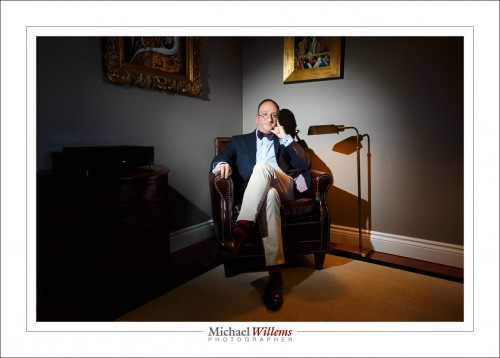

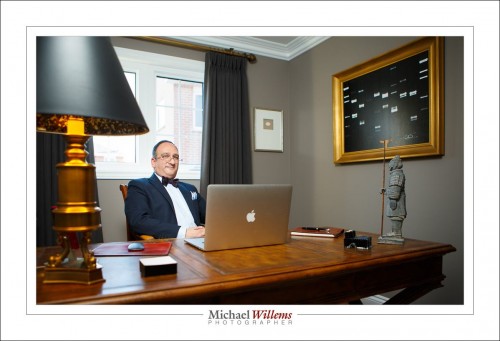

The next ones are certainly environmental portraits:

The one above used a 24-70mm lens and a speedlight with a Honl Photo 1/8″ grid. The one below, a 16-35mm wide angle lens and a speedlight with an umbrella:

What do they all have in common? Simplicity, good exposure, and a thorough knowledge of the technical necessities.

You can learn this too. Why not do it? I have several great opportunities coming up!

- Jan 4 and Jan 8: “Wide, Not Deep”: In-depth Special Topics. In Brantford, just 20 minutes west of Hamiltopn.

- Jan 28: Learn Pro Flash In Half A Day. In Toronto.

- Any time: private, or small group, coaching. See http://learning.photography.

All of these are excellent learning opportunities, and will broaden and deepen your knowledge significantly. Hope to see you there and then.